Johnny, Panam, Vixen

This is the first essay I've written on Cyberpunk 2077; I doubt it will be the last – indeed, I hope it won't, because if it is that will mean the game has failed for me. I'm currently fifteen hours in, and, at this moment, I have to say that it's on its way towards failing. And the question you may well ask is 'why?'

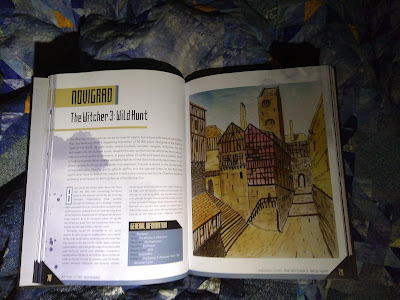

Well, because rather than being a wholly new game, this is, essentially, an add-on pack or downloadable content for The Witcher III. It uses the same engine, but far more than that, it uses the same mechanics and the same structure. And the mechanics which were excellently tuned for the wide open spaces of Velen or the relatively small urban environments of Oxenfurt and Novigrad do not work well in a sprawling metropolis like Night City.

Traversing the space

You can, of course, walk everywhere. It's possible. This is made more bearable by the fact that there really are a lot of interesting people – non-player-characters – on the streets, and that, although the same models do get reused, there are sufficient of them that it rarely becomes too obvious. And the architecture truly is epic; it's a great city for sight-seeing. But the distances are very considerable.

In the Witcher III there was one 'point of interest' every two minutes or so of walking time. That led to some absurdities, of course, such as inhabited villages existing within a couple of hundred metres of the lair of a monster which is a challenging fight for an experienced witcher. In Night City, too, civilian life carries on within very short distances of acts of gross violence, but the points of interest in Night City are very much sparser than in Velen.

In any case the scenery of the Witcher III is just so gobsmackingly beautiful that it's a pleasure for me to be in, even if doing nothing. Riding slowly right across Velen from the Nilfgaardian camp in the south east to the Pontar delta in the north west, on a quiet evening, for no particular purpose, is a joy in itself.

I'm sure there will be some people for whom the cityscape of Night City will have an equal attraction; even I, a rural person, do enjoy it. But it's a much more difficult landscape to navigate, because there are few long views, and many routes which look obvious on the map are in fact blocked by structures.

But, given the sparsity of points of interest and the overall size of the map, if you walk, you're going to do an awful lot of just walking.

Of course, you're not expected to just walk. You're expected to drive. But there are no cars in the Witcher III; the mechanic simply isn't there. Consequently, to implement cars in Cyberpunk, CD Projekt took the boat code from the Witcher III and repurposed it. Cars in Cyberpunk use exactly the same controls as boats in Witcher III, and behave in exactly the same way, at least if you're using a keyboard; with just one important difference.

The helm on the Witcher III's boats has three positions: hard over to port, dead centre, and hard over to starboard. Similarly, the steering on Cyberpunk cars has three positions: full lock left, straight ahead, and full lock right. The Witcher III's boats have two speeds, slow for navigating close to hazards, and somewhat faster when covering long distances. And this, though not a good model of sailing boat physics, works well enough in marine environments which have few obstacles and no pedestrians.

Cyberpunk's cars have three settings for speed, too: full acceleration, gentle deceleration, full brakes.

The accelerator, the brake, and the steering on cars are analogue controls. They're analogue controls for a very good reason. Cars operate in complex environments with many obstacles: pedestrians, kerbs, other cars, barriers and so on.

Computerised control systems can do very precise navigation of quadcopters which have motors which can only be on or off, by using what is called pulse-width modulation; that is, essentially, they spam the on-off switch of the motor very rapidly so that it's on for just sufficient a proportion of the time to output the power that would be output by an appropriately set analogue control. Similarly, it is at least theoretically possible that someone with enough patience, dedication, manual dexterity and tolerance of repetitive strain injury could learn to rattle the W, A, S and D keys sufficiently rapidly and sufficiently precisely to emulate analogue control of a Cyberpunk car; but if you're a mere mortal, forget it. The cars are undrivable.
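For anyone who hasn't met pulse-width modulation before, here is a minimal sketch of the idea in Python. It is purely illustrative: the function names, the tick counts and the 30% figure are my own assumptions, and it has nothing to do with how Cyberpunk or any real motor controller is actually implemented. It only shows that a switch which can be nothing but fully on or fully off will, if it is on for the right fraction of each short period, average out to the corresponding analogue level.

```python
def pwm_signal(duty_cycle, period_ticks, total_ticks):
    """Build an on/off signal as a list of 1s and 0s.

    Within each period of `period_ticks` ticks, the signal is on (1) for
    the first `duty_cycle` fraction of the ticks and off (0) for the rest.
    All names and numbers here are hypothetical, chosen for illustration.
    """
    on_ticks = round(duty_cycle * period_ticks)
    return [1 if (t % period_ticks) < on_ticks else 0
            for t in range(total_ticks)]


def average_output(signal):
    """Average level of the signal: roughly what an analogue control
    set to the same fraction of full power would deliver."""
    return sum(signal) / len(signal)


# A 'key' held down for 3 ticks out of every 10, over 1000 ticks.
signal = pwm_signal(0.3, 10, 1000)
print(signal[:10])
print(average_output(signal))
```

The catch, for a human at a keyboard, is that the period has to be short compared with how quickly the car responds, which is why a computer can do this and fingers, realistically, cannot.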

Of course it's possible that a game controller will do this better; most game controllers have at least two analogue sticks, and I'd hope that this is sufficiently well handled that with a controller you do actually get analogue control of speed and steering. If that is so, the cars will be drivable, and the whole game will be a different experience. But if that is so, CD Projekt should really have said "this game is not playable with keyboard and mouse, you must use a controller".

Here in the real world, in 2020, Tesla's cars can follow a lane, and can avoid hitting obstacles in front of them, without any input from the driver. In Witcher III, horses, like Teslas, won't ram themselves into solid obstacles or leap over cliffs, although they will mow down pedestrians without a second thought. You may reasonably ask why CD Projekt based Cyberpunk cars on Witcher III boats rather than on Witcher III horses. I don't know; it would have seemed an obviously better solution, but for some reason they didn't.

While we're on this, just as Cyberpunk's cars are essentially Witcher III's boats, so the rapid travel stations are essentially just Witcher III signposts. I believe that Night City's monorail system – NCART – was in fact pretty much fully implemented – we saw it in action in one of the early trailers – and it would have made a much less immersion-breaking way to get around; but it's been pulled from the final game. In my opinion that's a shame.

I should confess that I haven't actually used rapid travel, just as I don't in The Witcher III. It's immersion-breaking, and I just prefer not to.

Story and Characters

Game mechanics are not what a CD Projekt game is all about; it is, or should be, all about character and story. I only raise mechanics at such length here because they are so intrusive that they make getting to the story unreasonably difficult.

But even in character and story, my first fifteen hours have been underwhelming. Interesting, likeable characters have been introduced – and then killed off. Not because some failure of mine got them killed, but because their death is required by the game. Of your three companions in the critical early-plot quest 'The Heist', one inevitably dies; one, in my play-through, has disappeared and I do not know whether they will have any further part in the game; and one (also, it seems, inevitably) double-crossed and attempted to murder me – so it's unlikely we're going to be friends again.

The client on whose behalf the four of us were carrying out this quest has also disappeared, and although I suspect this will probably not prove permanent, she isn't around just now.

Great, startling, unexpected events have unfolded – have unfolded literally right in front of my eyes – but it does not seem to me that any actions or choices of mine had any impact on those events. At this stage, the main plot feels like a juggernaut, carving an unalterable pre-determined course through the world, unresponsive to anything I do.

So I'm left with, as characters I'm interested in, Victor Vector, a cheerful, friendly and well intentioned ripper doc; Misty, a slightly mystic-meg-new-age-hippy-fae tarot reading shopkeeper who is Vector's landlord; the obviously bright but equally obviously vulnerable and damaged Judy, and... yes, that's about it. And I don't – yet – know any of these characters well.

Of course I've met Johnny Silverhand, and through his memories I've sort-of met Kerry Eurodyne; I've met Rogue, both in Johnny's memories and in my own explorations; I've met T-Bug, but I've no idea whether she plays any further part in the story (I'll be disappointed but not surprised if she doesn't); I've met Panam, who I know from trailers will become important; I've met Clare, the bartender from Afterlife; I've met Jackie's mother Mama Welles; I've met Meredith Stout...

I've added a wee extra piece about Meredith Stout and the other romanceable characters at the end of this essay, since to say what I need to say about her involves spoilers.

Combat and Tactics

One plays role-playing games to play a role. The role I'm playing is a girl I call Vixen. She's small and not strong, so, contrary to Night City's brash style, she usually dresses simply and inconspicuously; she uses persuasion where she can, and tech and stealth where that doesn't work. Where stealth fails, she uses a sword for (relative) quietness. She has a clear moral code, or at least one that seems clear to her.

At least, that was the plan. In practice, it mostly hasn't worked. The tech skills so far have not felt engaging or especially useful – I've disabled a lot of security cameras, but mostly too late to be useful; and I've distracted a few enemies by hacking things in the environment. But the modal nature of scanning makes it artificial and not very free-flowing, and the decode minigame is just a nuisance.

Stealth is also underwhelming. I've put a lot of my skill and perk points into stealth, and I work hard at staying low and keeping out of sight. But it rarely gets you where you need to get to, and usually you end up in a fire fight.

In the early game, the Black Unicorn blade – which you get as a bonus if you bought both The Witcher III and Cyberpunk from CD Projekt's own online game store, GOG.com – is a really effective weapon, so that, in fact, you can take a sword to a gunfight and have a real chance of winning; however, with the first person view, sword play doesn't really work. You don't have good tactical awareness; when your opponent isn't in your relatively limited field of view, it's hard to know where, and how close, they are. Having said that, you can get quite a long way by just rushing around the fight scene in a random fashion slashing wildly all the time. This, with regular use of the health-boosting inhaler, is reasonably effective even when you're up against half a dozen goons with assault rifles. But this isn't role play. It feels wrong.

While on this, I normally have Vixen wear full length jeans and a very high grade bullet proof vest. She's understated because she doesn't want to attract attention, because she wants to be underestimated by any attention she does attract. But I have acquired along the way a skimpy sequinned crop-top and a pair of vestigial shorts which actually have higher armour statistics than those. Again, it makes no sense; it perturbs the willing suspension of disbelief.

The 'health inhaler' is, of course, your Witcher swallow potion. One of the benefits of the 700 or so years which have passed between the action of Witcher III and of Cyberpunk is that swallow no longer has cumulative toxicity, so you can use it frequently during a fight (provided you have enough of it, and I never ran out) to soak up ludicrous amounts of damage.

Finally, there are bugs. There are not nearly as many bugs (at least on PC) as some commercial reviewers have suggested, but there are a few that are plot breaking; there is no solution other than going back to a previous save, and doing something entirely different. You can't abort what you were doing, go and do something else to gain more experience and skills, and come back later.

I've seen one non-player character in a 'T' pose. Some of the tarot card murals that I'm supposed to see aren't visible to me, although the game checks them off to say I've seen them. On a couple of occasions, the audio of dialogue ran hugely behind the animation and subtitles. This is not bad for a just-released game; of course it isn't perfect.

Conclusion

In conclusion, bear in mind that I'm not really the target audience for this game; I'm not a great fan of cyberpunk as a genre, the present is easily a dark enough future for me. I don't like guns. I don't like killing people even if they 'deserve' it; I'd much prefer to settle conflict by negotiation. The poverty of non-player character repertoire in modern video games, as I've written ad nauseam, really disappoints me, because I really feel we now have the technology to do so much better.

I bought into this game because I have faith in CD Projekt as story tellers. And I do think there is great story here – there's certainly the potential for it. But at this present moment, after fifteen hours of play, I'm not really getting enough story reward to compensate for all the awkward, clunky mechanics. I am not talking about bugs here – as I've acknowledged, there are bugs, but none I've seen is significant. The problem is not in bugs, but in intended features which just don't work well.

Again, the main problem I'm talking about is the cars. It may be that, with a controller, the problem doesn't arise. I mean to find out. After all, there is plenty of video – not only in CD Projekt's trailers – of people driving around Night City reasonably smoothly. But it really wouldn't be rocket science for CD Projekt to 'Teslaise' the cars in a future update, so as to give them intelligent lane following and collision avoidance. If they did that, this would become a good game.

Here be Spoilers: Romance in Night City

OK, spoiler time. If you don't want spoilers read no further.

Right, you're sure you want to read on?

A CD Projekt game would not be a CD Projekt game without sexual relationships. In the trailers CD Projekt strongly hinted at who the romanceable characters – the love interests – would be.

Judy is definitely one, and she's the one I'm most interested to explore. But Meredith Stout, the hard as nails corporate agent, was clearly trailed as another. It turns out – how can I put this politely? – she's a slightly updated version of a Witcher I sex card. As relationships go, I've seen deeper and more meaningful puddles of vomit. That's five minutes of my life (because I swear it wasn't longer) that I shan't get back again.

For the rest, my guesses (these are guesses, not knowledge) are that

- Victor Vector is romanceable if you're playing a straight woman, and possibly also if you're playing a gay or bi man;

- Kerry Eurodyne is romanceable in all cases;

- Panam is romanceable but I suspect only by straight men;

- Clare, the bartender from Afterlife, may be romanceable.

Other possibles are Misty the fae head-shop keeper, but I don't think so, and T-Bug, who would be really interesting but is I suspect no longer in the game.

Obviously, Night City has a very large number of sex workers, but my guess is that none of them is romanceable in any more meaningful sense than Meredith Stout is. I don't, as yet, know for sure that any of the romance options have any depth to them, although dear God one would hope so. For completeness, Meredith definitely swings both ways.

Johnny Silverhand is definitely not romanceable in the conventional sense since he only exists in your head; but I think we will (I haven't yet) at some point relive his memories of sex with Altiera Cunningham (who is also either dead or existing as a digital consciousness somewhere).