If you interview three witnesses to some real-life event, you'll get three accounts, and it's highly likely that those accounts will be different, possibly sharply so. That doesn't mean that any of them are lying or untrustworthy; it's just that people perceive things differently. They perceive things differently partly because they have different viewpoints, but also because they have different understandings of the world.

Furthermore, there isn't a clear divide between an honest account from a different viewpoint and outright propaganda; rather, there's a spectrum. The conjugation is something like this:

- I told the truth

- You may have been stretching the point a little

- He overstated his case

- They were lying

A digression. I am mad. I say this quite frequently, but because most of the time I seem quite lucid I think people tend not to believe me. But one of the problems of being mad - for me - is that I sometimes have hallucinations, and I sometimes cannot disentangle in my memory what happened in dreams from what happened in reality. So I have to treat myself as an unreliable witness - I cannot even trust my own accounts of things which I have myself witnessed.

I suspect this probably isn't as rare as people like to think.

Never mind. Onwards.

The point of this, in constructing a model of news, is that we don't have access to a perfect account of what really happened. We have at best access to multiple contested accounts. How much can we trust them?

Among the trust issues on the Internet are

- Is this user who they say they are?

- Is this user one of many 'sock puppets' operated by a single entity?

- Is this user generally truthful?

- Does this user have an agenda?

Clues to trustworthiness

I wrote in the CollabPRES essay about webs of trust. I still think webs of trust are the best way to establish trustworthiness. But there have to be two parallel dimensions to the web of trust: there's 'real-life' trustworthiness, and there's reputational (online) trustworthiness. There are people I know only from Twitter whom I nevertheless trust highly; and there are people I know in real life as real people, but don't necessarily trust as much.

If I know someone in real life, I can answer the question 'is this person who they say they are?'. If the implementation of the web of trust allows me to tag the fact that I know this person in real life, then, if you trust me, you can have confidence that this person exists in real life.

Obviously, if I don't know someone in real life, then I can still assess their trustworthiness through my evaluation of the information they post, and in scoring that evaluation I can allow the system to model the degree of my trust for them on each of the different subjects on which they post. If you trust my judgement of other people's trustworthiness, then how much I trust them affects how much you trust them, and the system can model that, too.
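The per-subject and second-hand trust described above might be modelled something like this. It is only a minimal sketch: the `TrustWeb` class, its method names, the 0.0 to 1.0 scale, and the rule of discounting a recommendation by how far I trust the recommender's judgement are all my own assumptions for illustration, not a settled design.

```python
class TrustWeb:
    """Hypothetical per-subject web of trust; scores run from 0.0 to 1.0."""

    def __init__(self):
        # (truster, trustee, subject) -> how far truster trusts trustee on subject
        self.ratings = {}
        # (truster, other) -> how far truster trusts other's judgement of people
        self.judgement = {}

    def rate(self, truster, trustee, subject, score):
        self.ratings[(truster, trustee, subject)] = score

    def trust_judgement(self, truster, other, score):
        self.judgement[(truster, other)] = score

    def trust(self, truster, trustee, subject):
        """Return a direct rating if one exists, else the best second-hand one."""
        own = self.ratings.get((truster, trustee, subject))
        if own is not None:
            return own
        # Assumption: a recommendation is worth the recommender's rating,
        # discounted by how far the truster trusts the recommender's judgement.
        derived = [
            weight * self.ratings[(other, trustee, subject)]
            for (who, other), weight in self.judgement.items()
            if who == truster and (other, trustee, subject) in self.ratings
        ]
        return max(derived) if derived else None


web = TrustWeb()
web.rate("simon", "alice", "politics", 0.9)
web.rate("simon", "alice", "cookery", 0.2)
web.trust_judgement("reader", "simon", 0.5)
print(web.trust("reader", "alice", "politics"))
```

This only looks one step along the web; a real system would have to decide how far to follow chains of recommendation and how quickly trust should decay along them.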

Figure: Alleged follower network of Twitter user DavidJo52951945, alleged to be a Russian sock-puppet

Also, a news system based in the southern United States, for example, will have webs of trust biased heavily towards creationist worldviews. So an article on, for example, a new discovery of a dinosaur fossil which influences understanding of the evolution of birds is unlikely to be scored highly for trustworthiness on that system.

I still think the web of trust is the best technical aid to assessing the trustworthiness of an author, but there are some other clues we can use.

|

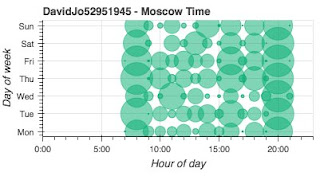

| Alleged posting times of DavidJo52951945 |

But modern technology allows us to get the location of the device from which a message was sent. If Twitter required that location tracking was enabled (which it doesn't, and, I would argue, shouldn't), then a person claiming to be tweeting from one location using a device in another location would be obviously less trustworthy.

There are more trust cues to be drawn from location. If the location from which a user communicates never moves, then that's a cue. Of course, the person may be housebound, for example, so it's not a strong cue. Equally, people may have many valid reasons for choosing not to reveal their location; but simply having or not having a revealed location is a cue to trustworthiness. Of course, location data can be spoofed. It should never be trusted entirely; it is only another clue to trustworthiness.
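As a rough sketch of how a claimed location could be compared with a device-reported one: the function names, the cue labels, and the 50 km tolerance below are assumptions of mine, and the result is a cue to be weighed, never a verdict, since device locations can be spoofed.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def location_cue(claimed, device, tolerance_km=50.0):
    """Classify the relation between claimed and device locations.

    Both arguments are (lat, lon) tuples, or None if not revealed.
    The tolerance is an arbitrary illustrative value.
    """
    if device is None:
        return "undisclosed"
    if claimed is None:
        return "no-claim"
    if haversine_km(*claimed, *device) <= tolerance_km:
        return "consistent"
    return "inconsistent"


glasgow = (55.8642, -4.2518)
raqqa = (35.9528, 39.0079)
print(location_cue(claimed=raqqa, device=glasgow))
```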

The user also allegedly claims a phone number which is the same as a UKIP phone number in Northern Ireland.

Databases are awfully good at searching for identical numbers. If multiple users all claim to have the same phone number, that must reduce their presumed trustworthiness, and their presumed independence from one another. Obviously, a system shouldn't publish a user's phone number (unless they give it specific permission to do so, which I think most of us wouldn't), but if they have a verified, distinct phone number known to the system, that fact could be published. If they enabled location sharing on the device with that phone number, and their claimed posting location was the same as the location reported by the device, then that fact could be reported.
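The check for shared phone numbers could be as simple as the following sketch. The function name, the user names, and the phone numbers are all invented for illustration, and the digit-only normalisation is a simplifying assumption. Note that it reports only which users share a number, never the number itself.

```python
from collections import defaultdict


def shared_phone_groups(claimed_numbers):
    """Group users who claim the same phone number.

    claimed_numbers maps user name -> phone number as typed.
    Returns a list of sets of user names; the numbers are not returned.
    """
    by_number = defaultdict(set)
    for user, number in claimed_numbers.items():
        # Simplifying assumption: two numbers match if their digits match.
        digits = "".join(ch for ch in number if ch.isdigit())
        if digits:
            by_number[digits].add(user)
    return [users for users in by_number.values() if len(users) > 1]


claims = {
    "user_a": "+44 1632 960123",
    "user_b": "+44 (1632) 960-123",
    "user_c": "+44 1632 960999",
}
print(shared_phone_groups(claims))
```

Users who turn up in the same group would have their presumed independence from one another reduced; how sharply is a policy question this sketch doesn't try to answer.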

Another digression: people use different identities on different internet systems. Fortunately there are now mechanisms which allow those identities to be tied together. For example, if you trust what I post as simon_brooke on Twitter, you can look me up on keybase.io and see that simon_brooke on Twitter is the same person as simon-brooke on GitHub, and also controls the domain journeyman.cc, which you'll note is the domain of this blog.

So webs of trust can extend across systems, provided users are prepared to tie their Internet identities together.

The values (and limits) of anonymity

Many people use pseudonyms on the Internet; it has become accepted. It's important for news gathering that anonymity is possible, because news is very often information that powerful interests wish to suppress or distort; without anonymity there would be no whistle-blowers and no leaks; and we'd have little reliable information from repressive regimes or war zones.

So I don't want to prevent anonymity. Nevertheless, a person with a (claimed) real world identity is more trustworthy than someone with no claimed real world identity, a person with an identity verified by other people with claimed real world identities is more trustworthy still, and a person with a claimed real world identity verified by someone I trust is yet more trustworthy.
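That ordering can be written down directly. In this sketch the level names and the `identity_level` function are hypothetical, and I assume the set of verifiers passed in contains only people who themselves have claimed real-world identities.

```python
from enum import IntEnum


class IdentityLevel(IntEnum):
    """Higher values mean a more trustworthy identity claim."""
    ANONYMOUS = 0             # no claimed real-world identity
    CLAIMED = 1               # claimed, but verified by nobody
    VERIFIED_BY_OTHERS = 2    # verified by people with claimed identities
    VERIFIED_BY_TRUSTED = 3   # verified by someone the reader trusts


def identity_level(claims_identity, verifiers, trusted_by_me):
    """Rank a user's identity claim from one reader's point of view.

    verifiers and trusted_by_me are sets of user names.
    """
    if not claims_identity:
        return IdentityLevel.ANONYMOUS
    if verifiers & trusted_by_me:
        return IdentityLevel.VERIFIED_BY_TRUSTED
    if verifiers:
        return IdentityLevel.VERIFIED_BY_OTHERS
    return IdentityLevel.CLAIMED


level = identity_level(True, {"friend", "stranger"}, {"friend"})
print(level.name)
```

Because the level depends on whom the reader trusts, the same author can rank differently for different readers, which is what a web of trust implies.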

So if I have two stories from the siege of Raqqa, for example, which do I trust more? One is from an anonymous user with no published location claiming to be in Raqqa. The other is from a journalist with a published location in Glasgow, who has a claimed real-world identity which is verified by people I know in the real world, and who claims in his story to have spoken by telephone to (anonymous) people whom he personally knows in Raqqa. Undoubtedly the latter.

Of course, if the journalist in Glasgow who is known by someone I know endorses the identity of the anonymous user claiming to be in Raqqa, then the trustworthiness of the first story increases sharply.

So we must allow anonymity. We must allow users to hide their location, because unless they can hide their location anonymity is fairly meaningless. In any case, precise location is only really relevant to eye-witness accounts, so a person who allows their location to be published within a 10 km box may be considered more reliable than one who doesn't allow their location to be published at all.
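One way to publish a location only to within a box is to snap it to a coarse grid before it leaves the system. This is a sketch under my own assumptions: the `coarsen` function is hypothetical, it uses a flat approximation of about 111.32 km per degree of latitude, and it would misbehave very close to the poles.

```python
import math

KM_PER_DEGREE_LAT = 111.32  # approximate; assumes a spherical Earth


def coarsen(lat, lon, box_km=10.0):
    """Return the (south, west, north, east) grid box containing a point.

    Only the box, not the precise point, would be published.
    """
    lat_step = box_km / KM_PER_DEGREE_LAT
    south = math.floor(lat / lat_step) * lat_step
    # A degree of longitude shrinks with the cosine of the latitude,
    # so the longitude step is computed at the middle of the latitude band.
    mid_lat = math.radians(south + lat_step / 2)
    lon_step = box_km / (KM_PER_DEGREE_LAT * math.cos(mid_lat))
    west = math.floor(lon / lon_step) * lon_step
    return (south, west, south + lat_step, west + lon_step)


south, west, north, east = coarsen(55.8642, -4.2518)
print(f"published box: {south:.3f},{west:.3f} to {north:.3f},{east:.3f}")
```

Because the grid is fixed rather than centred on the user, repeated posts from the same place always give the same box and cannot be averaged to recover the precise point.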

Conclusion

We live in a world in which we have no access to 'objective reality', if such a thing even exists. Instead, we have access to multiple, contested accounts. Nevertheless, there are potential technical mechanisms for helping us to assess the trustworthiness of an account. A news system for the future should build on those mechanisms.
